Redesign & New Functionalities: Quality Control Mobile App

Redesign and enhance the Quality Control Mobile App with new features for improved usability and efficiency.

Role

UX/UI Designer

Industry

Wholesale

Team

Cross-functional

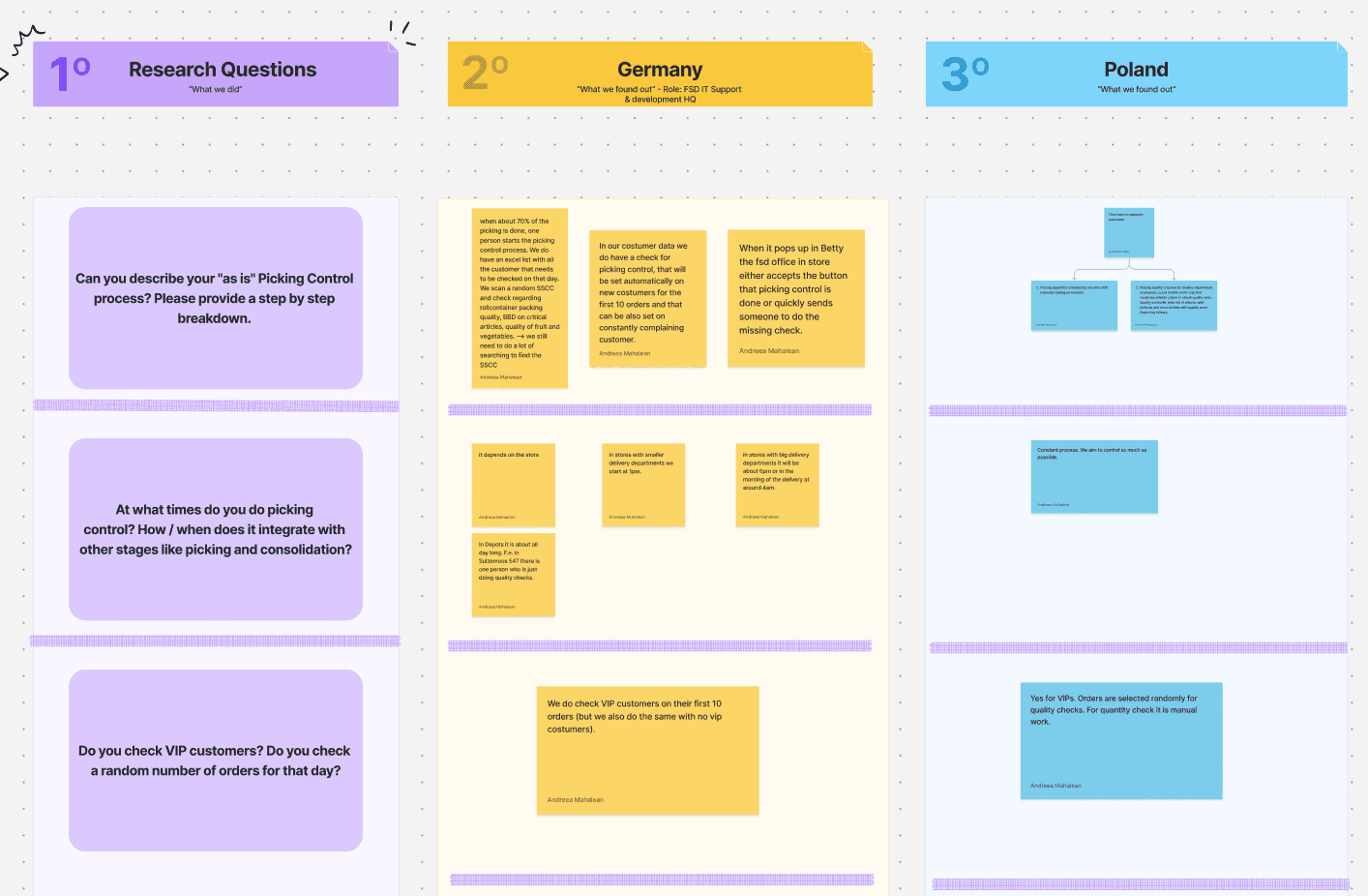

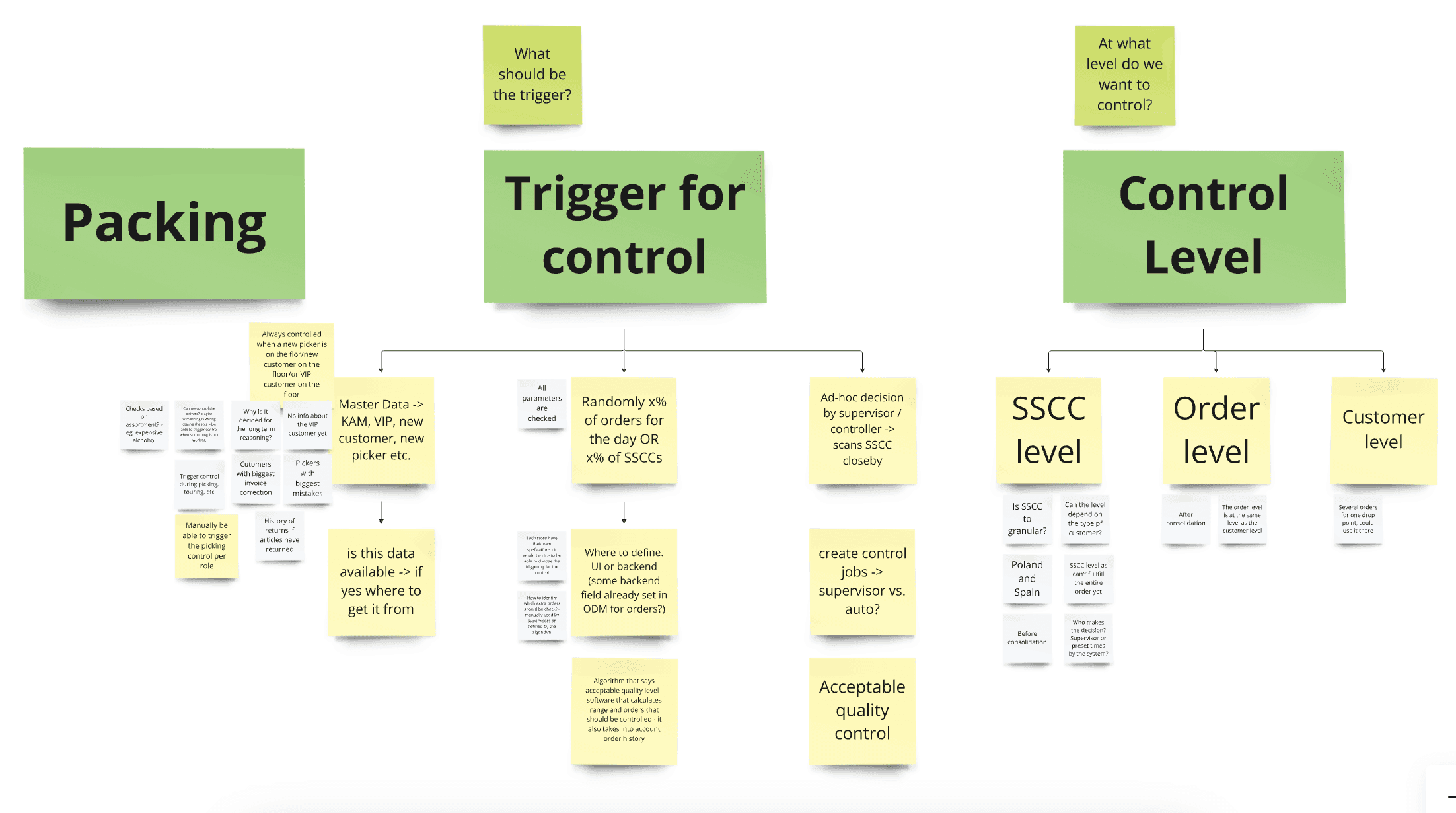

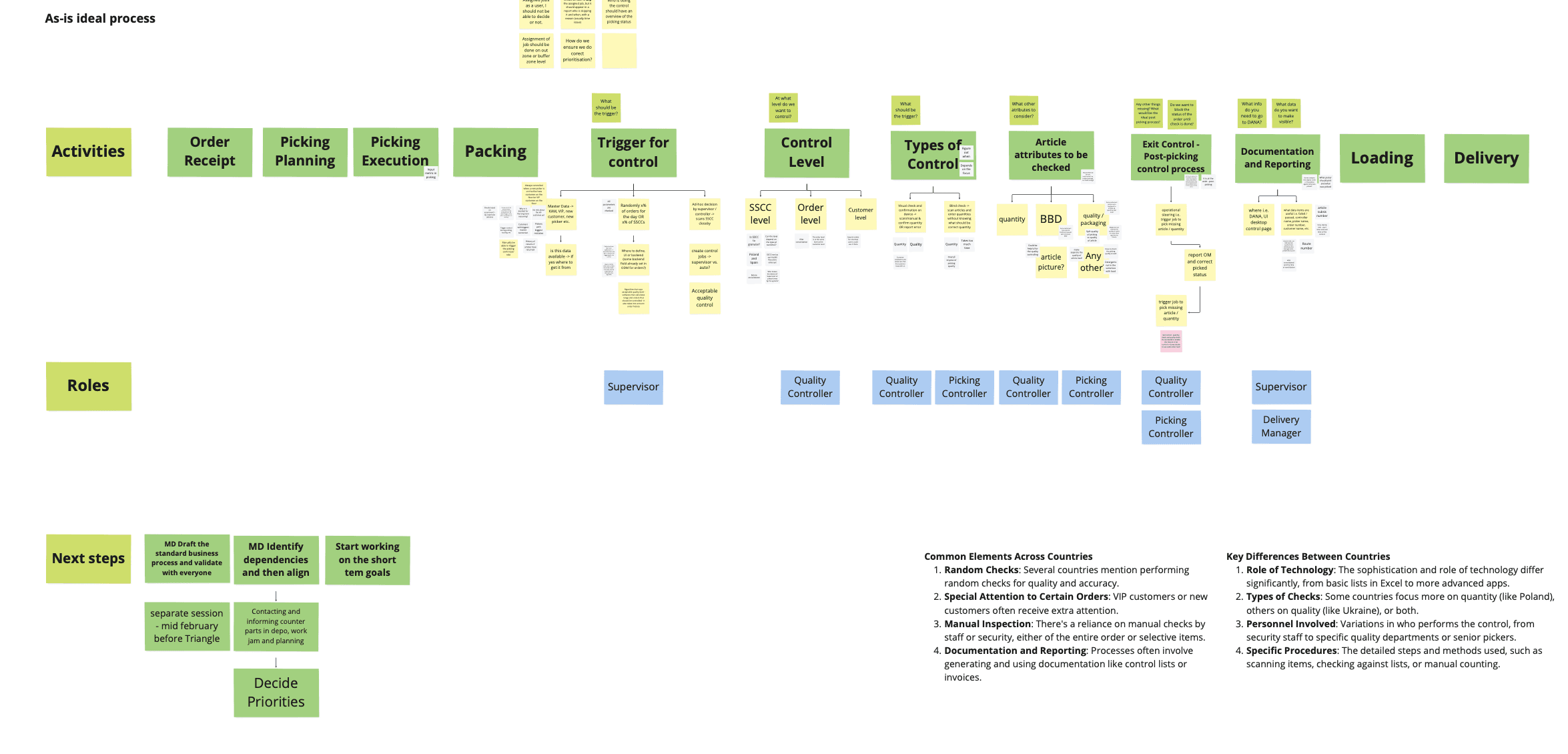

Jobs to be done

I added a Jobs to be Done (JTBD) step between the research and usability testing phases to ground my design solutions in real user needs, making the tool more effective and user-friendly.

Problem definition

Small picking mistakes drove an 18% return rate, and 58% of those returns were caused by picking errors. This cost METRO customer trust and €45M in lost sales, and it added to growing operational inefficiencies. Beyond the financial impact, it eroded customer loyalty and reduced warehouse team efficiency, making change urgent.

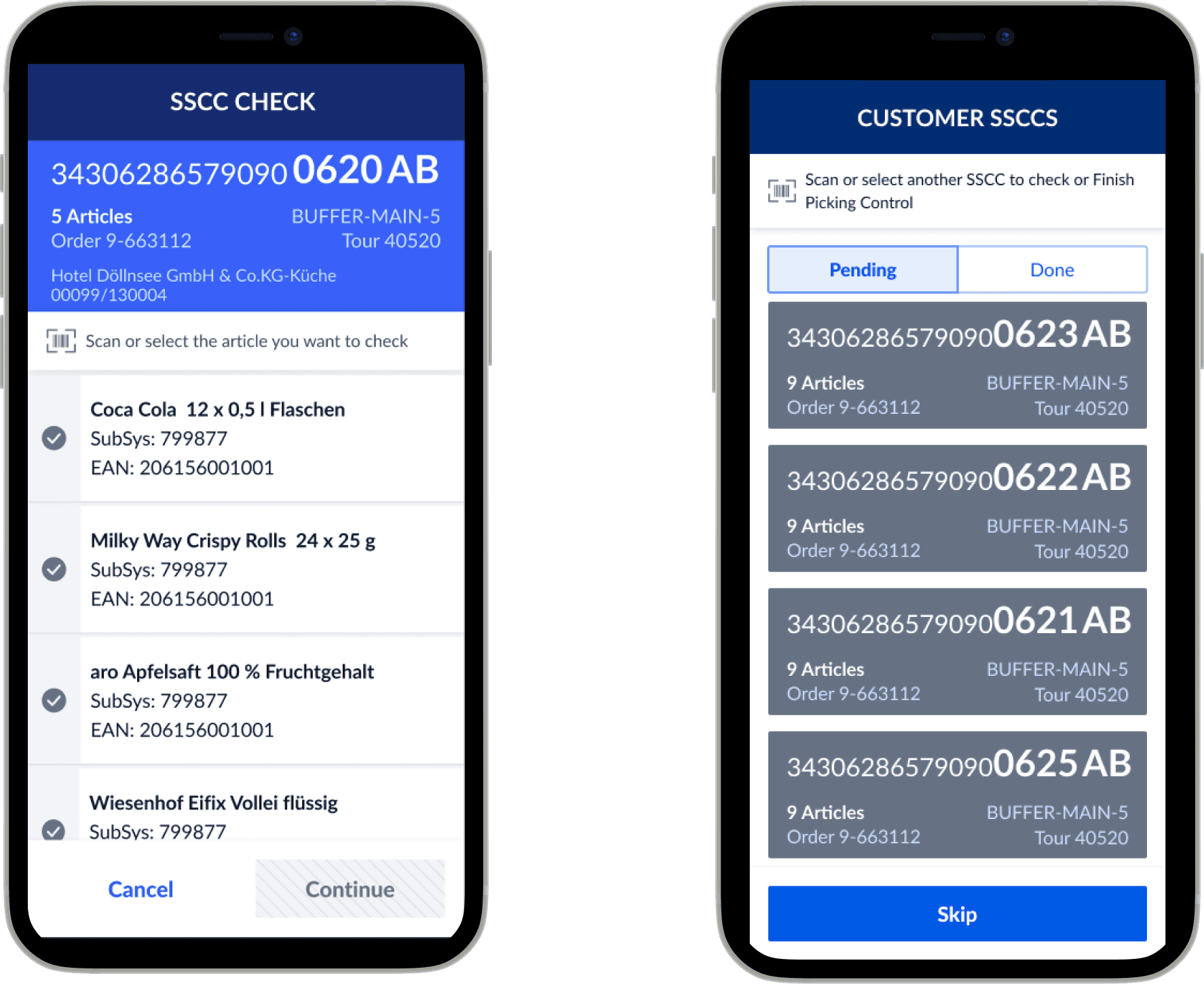

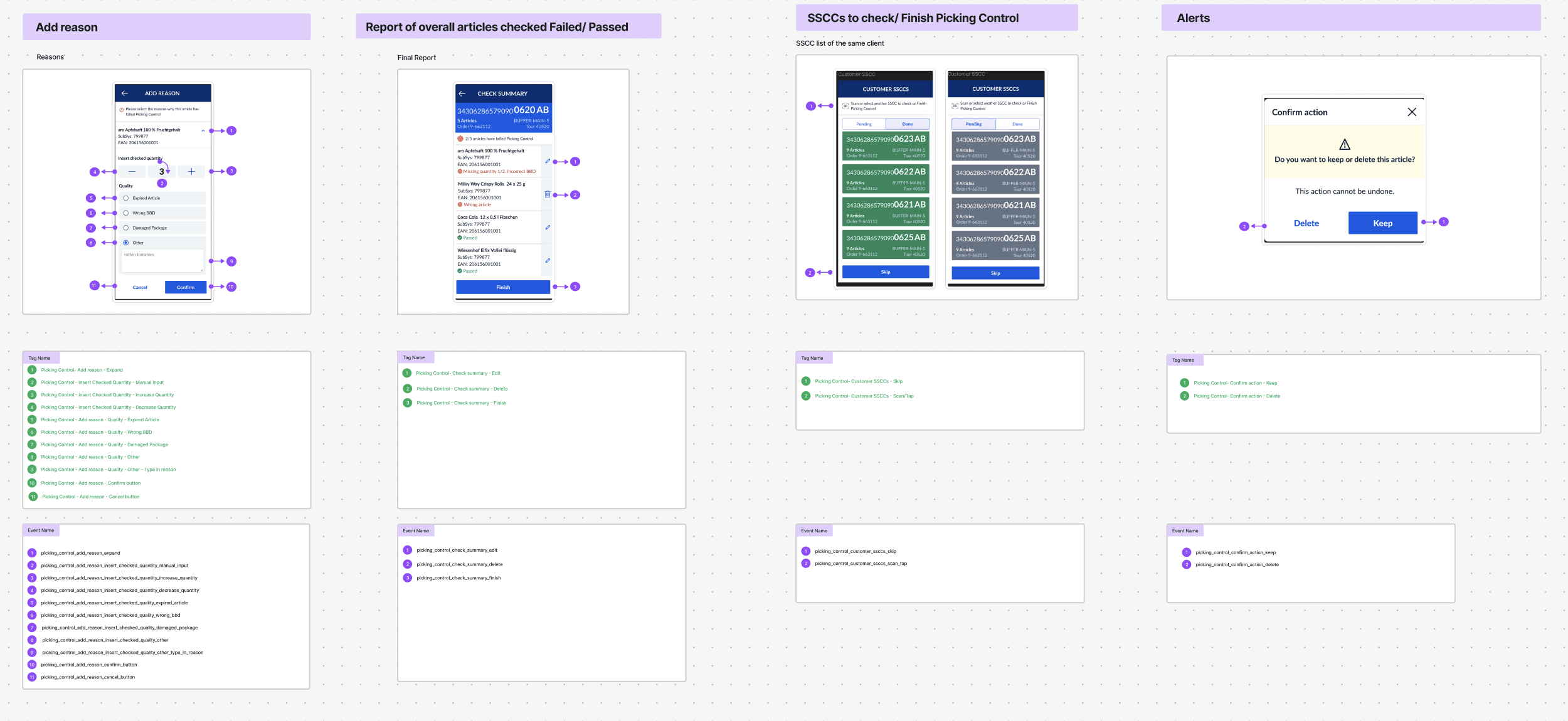

Moderated Usability Testing

I developed a test plan and documented the results of each task. Usability testing sessions took place in Düsseldorf (Germany) and Warsaw (Poland) with 12 participants. The Figma prototype was tested on both a Zebra device and an iPhone.

Pilot in Poland and Germany: part 1

The first iteration of the enhanced Picking Control tool was tested in two key markets: Poland and Germany. These countries were chosen as pilot locations due to their operational scale and diverse use cases, making them ideal environments to assess the tool’s impact.

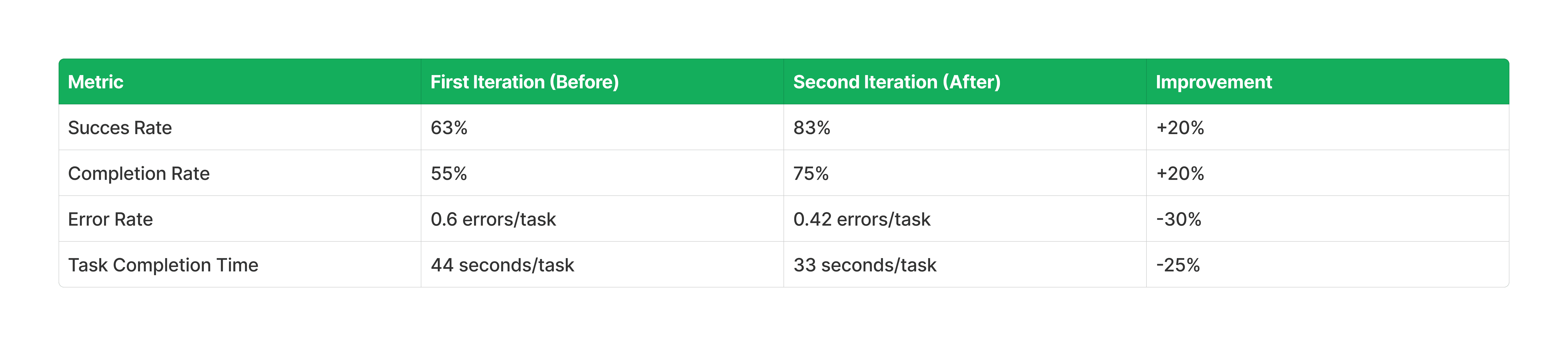

Conclusions from the first usability test

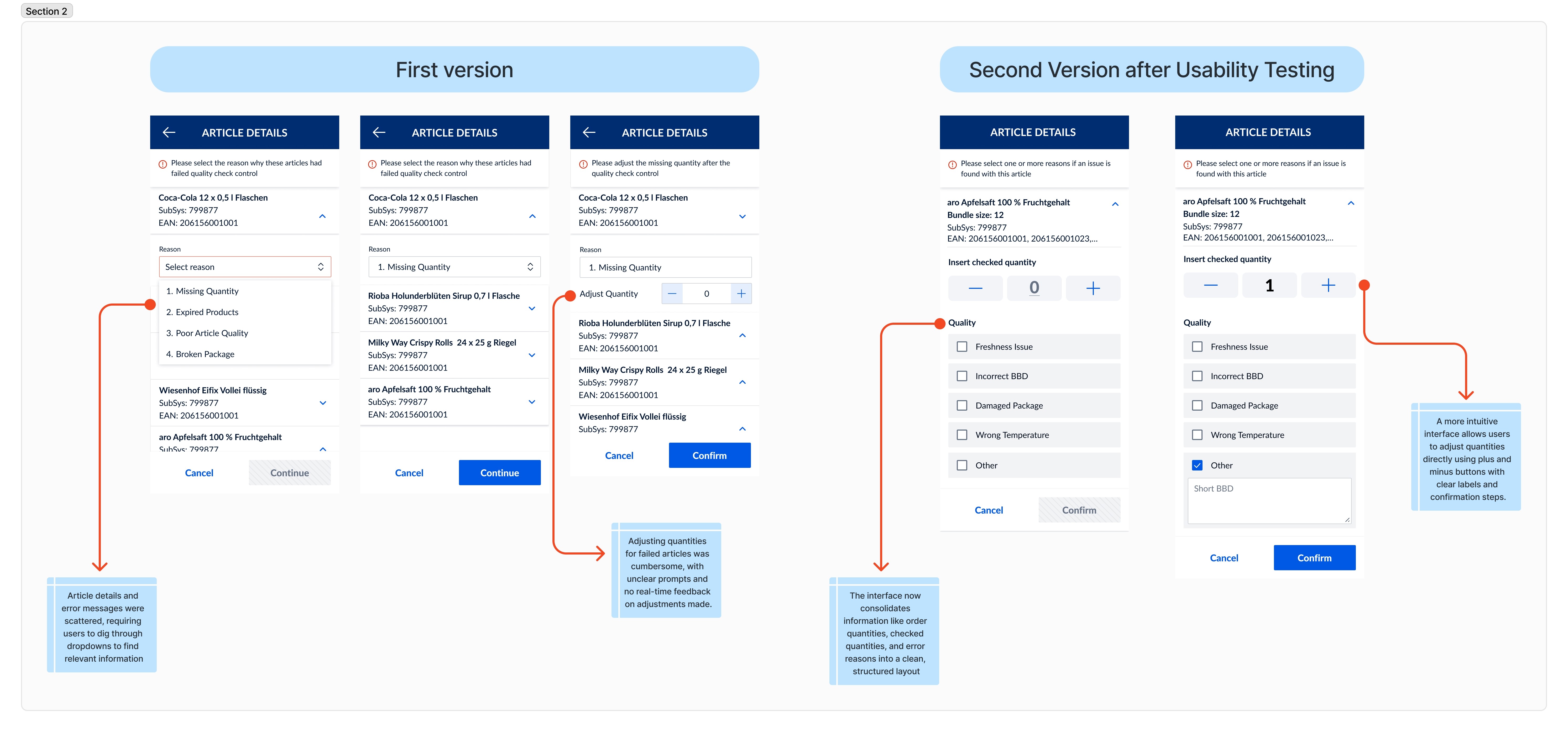

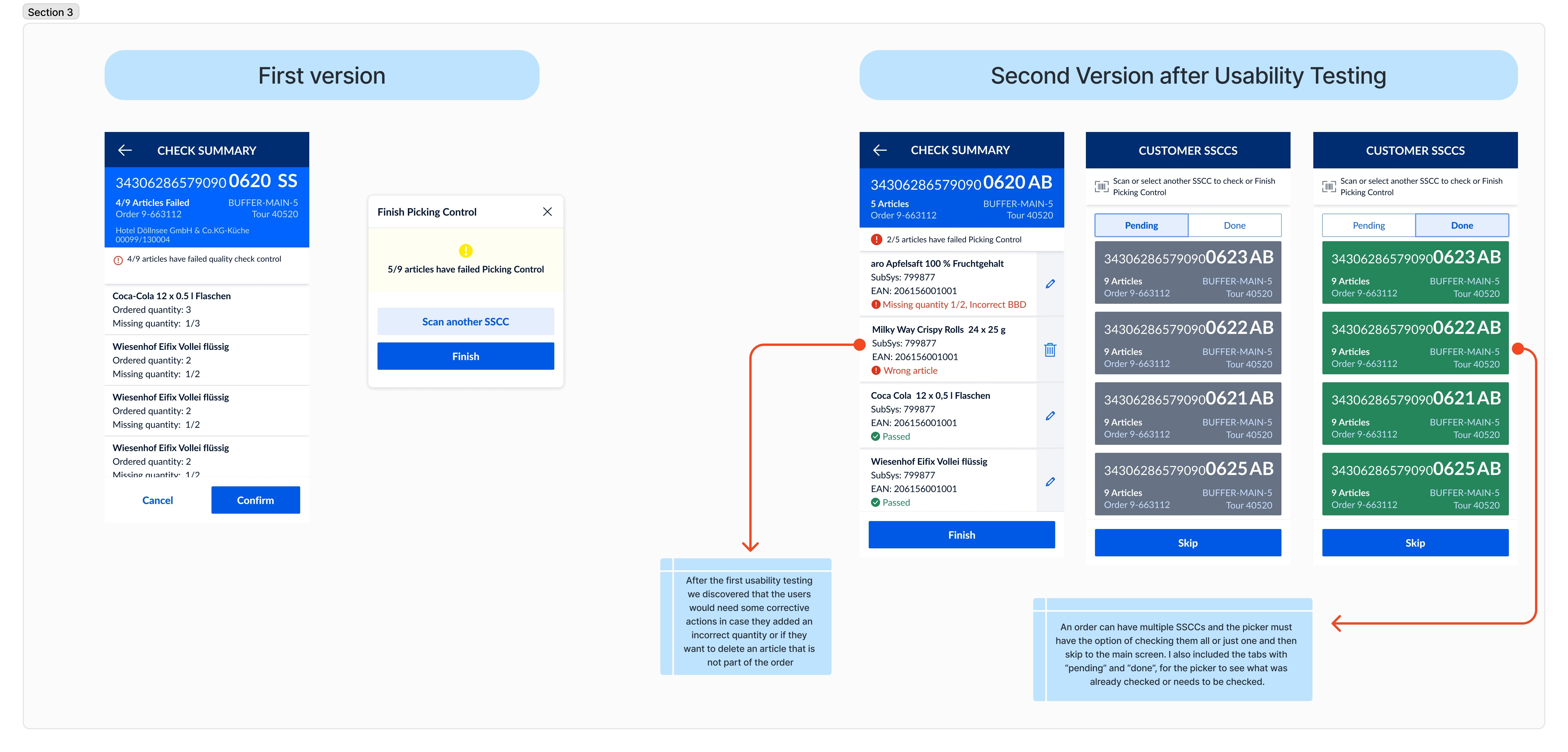

The first round of usability testing highlighted several critical areas where the Picking Control tool needed to become more effective and user-friendly. While the initial version provided a functional baseline, testing revealed significant gaps that hindered user performance and satisfaction:

Key Findings:

Low Success and Completion Rates:

The task success rate was only 63%, and just 55% of participants completed all assigned tasks. These numbers pointed to navigation problems, unclear workflows, and insufficient guidance during error resolution.

High Error Rate:

An average of 0.6 errors per task revealed confusion during key processes such as adjusting quantities, identifying why articles failed, and navigating between tasks. This error rate underscored the need for more intuitive interfaces and better feedback mechanisms.

Slow Task Completion Time:

A median task completion time of 44 seconds suggested inefficiencies in the workflow and overly complex steps, particularly for resolving errors and confirming adjustments.

Pilot in Poland and Germany: part 2

I ran a second round of usability testing with the enhanced prototype in the same two countries, Poland and Germany. This round let me evaluate the improvements made after the first iteration: it showed how the adjustments addressed earlier challenges and uncovered new areas for further refinement.

Improvements and their impact

The improvements to the Picking Control tool after usability testing not only addressed user pain points but also streamlined workflows, reduced error rates, and increased user confidence in the system. These changes are expected to have a direct impact on operational efficiency, picker performance, and overall customer satisfaction.

Other projects

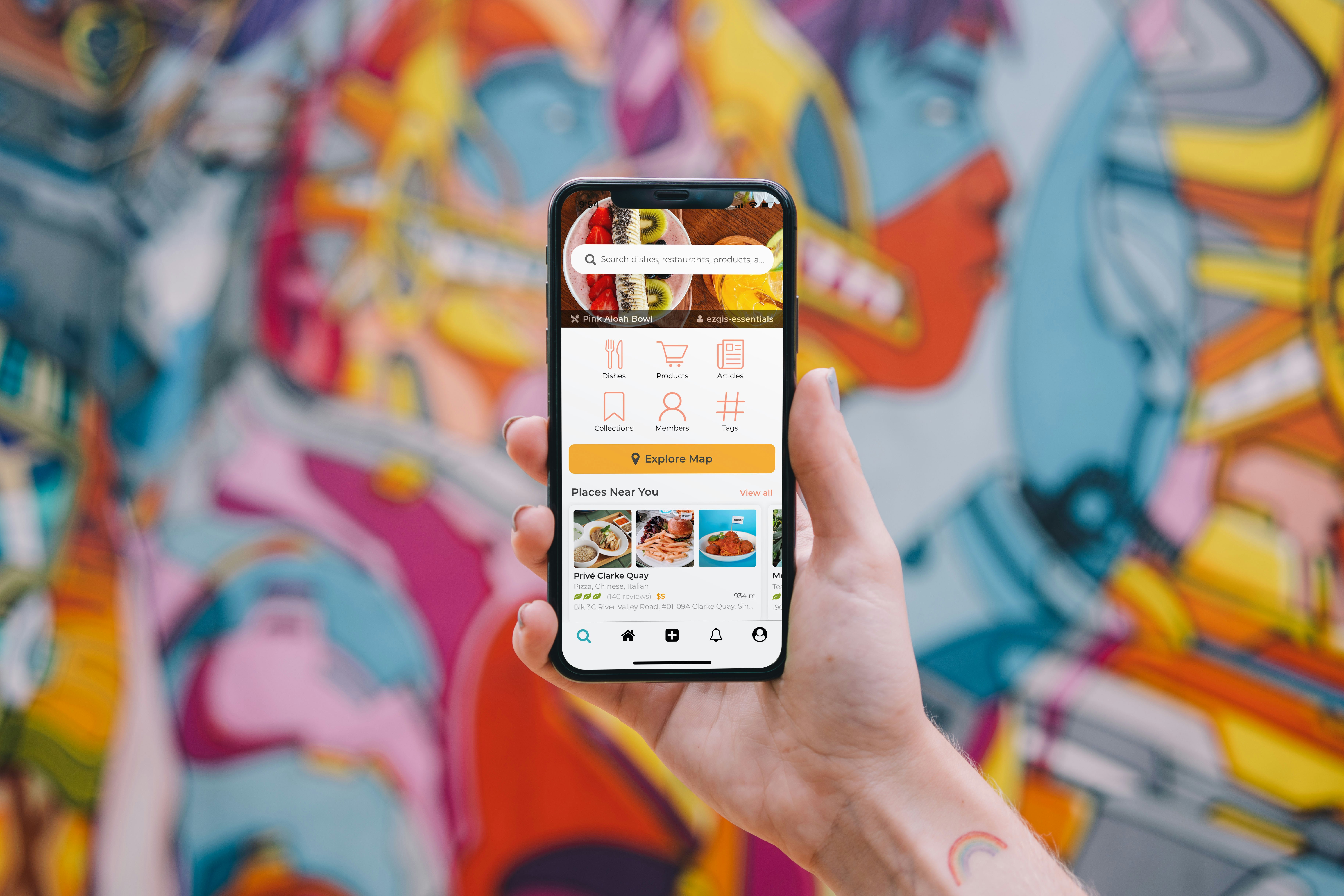

Smart Ordering App for All-You-Can-Eat Restaurants

Work in progress

Accounting Web App: Company Profile Interface

Designing a web app, from concept to prototype, that streamlines accounting by letting users easily add new companies, enter key details, attach important files, and generate insightful reports.

Picking Dashboard - Web App

This new tool provides complete oversight, improving workflow visibility and operational efficiency.

Landing Pages

Website where F1 enthusiasts can buy resold F1 items.